The Real Bottleneck Nobody Talks About

Most CRO discussion focuses on test results, win rates, uplift percentages, and revenue impact. There isn't much talk about what happens before the test launches, and that's where the real time sink is.

Here's the shocking reality: approximately 70% of the time spent on experiments goes to the research phase. We're talking about analyzing funnels, reviewing KPIs, combining quantitative data with qualitative behavior insights, reviewing session recordings, combing through survey responses, and trying to piece together not only what's actually happening but why.

This is an essential phase that you can't skip. But it's also the point where most experiments slow to a crawl.

The problem isn't how long the research takes. The problem is that turning research into a clear and testable hypothesis can take even longer. You've got scroll data, heatmaps, survey feedback, multiple analytics dashboards, and session recordings pointing at several different things. Synthesizing all of that data into one clear and coherent direction, validating it, and then finally translating it into something that can actually be built all require review and refinement.

And while that is all happening, your opportunity cost keeps compounding. Every week that goes by without launching your test is another week that potential revenue continues to slip through the cracks.

What Actually Changed in Our Process

AI definitely didn't replace our CRO specialists. Instead, we've armed them with better tools to move faster through the more time-consuming parts of the process.

Structuring Research and Insights

Interpreting data and finding gaps used to take hours. AI can now do it in a fraction of the time, and more thoroughly. We also use it to organize our findings, group similar issues, suggest potential explanations, and help shape the hypothesis much faster than we could before.

But we kept the most critical part, where our experts still review the output. We still have humans validating whether the conclusions AI gathered actually match the data. They filter what's relevant, set aside weak assumptions, and still make the final decision on what's worth testing.

We've applied AI in a way that accelerates our process while maintaining the quality of our hypotheses. Each idea is expanded on, thoroughly challenged, and properly assessed before we test. We maintain the same standard we applied in our previous manual process, but now we get there faster.

"AI didn't change the role of testing," says Thor Fernandes, Head of CRO at PurpleFire. "It changed how fast you can arrive at a strong hypothesis."

Generating a Hypothesis

Before, we could typically produce around 15 testable ideas from one research cycle. Now, with AI helping to connect data points and turn them into valuable insights, we're consistently generating twice that number in about the same timeframe.

Think of it this way: more hypotheses means more angles explored. That’s more potential root causes considered, and much better odds of pinpointing the change needed to actually move the needle in the right direction.

"The more angles you explore, the stronger your hypothesis becomes," Fernandes notes.

Developing a Wireframe

After settling on a hypothesis, you’ll need to put it into a wireframe, allowing you to see what changes are needed on the page based on the user problem your research identified. The wireframe lets you lay out the exact changes required for the test, including structure, content, and element behavior, making sure your original idea is clearly defined before moving ahead.

We now utilize AI to dramatically speed up our wireframe creation, putting together more complete versions in much less time. A batch of wireframes that used to take around one week can now be done in two days.

With high-quality wireframes ready to go, our design team can refine them to meet the exact needs of the brands we work with much more efficiently.

The Numbers Behind the Shift

We can see the impact throughout every stage of our process:

Hypothesis pipelines that previously contained around 15 ideas now regularly exceed 30 per research cycle

Wireframe development for monthly testing roadmaps dropped from approximately one week to 1-2 days

Landing page projects (research, ideation, and wireframe phases) reduced from around 2 weeks to 1 week

Full CRO audits that previously took around 4 weeks can now be done thoroughly in less than 2 weeks

These aren't theoretical improvements. They're happening in active client programs as this is being written.

"The real cost in CRO is not running a bad test," Fernandes explains. "It's taking too long to run a good one."

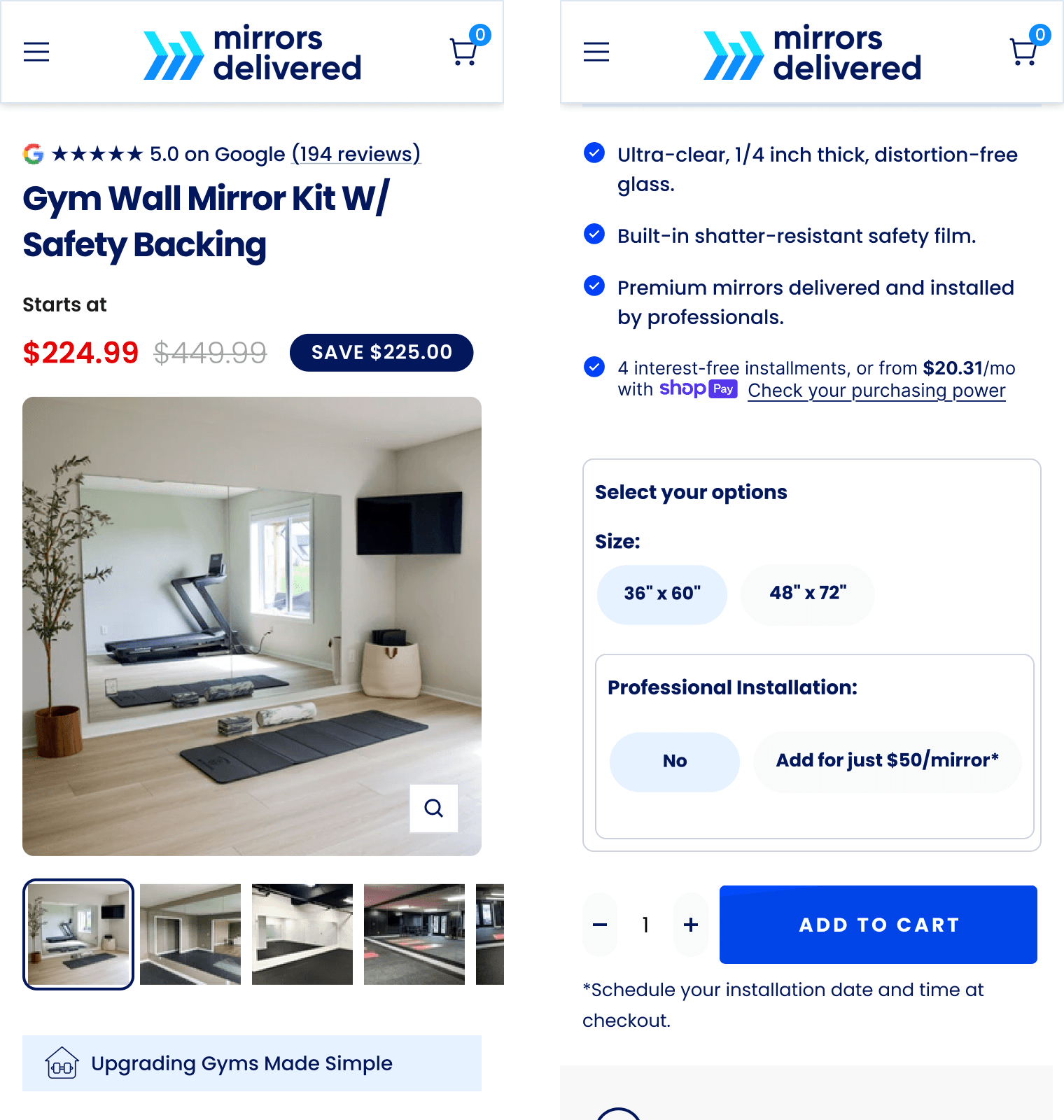

Case Study: Mirrors Delivered

To better explain how this plays out in real life, here's a peek at a recent project for Mirrors Delivered, an e-commerce brand that sells gym mirrors with professional installation.

The Problem

The brand's product page required users to fill out a configuration form before making their purchase. They had to define specifications including size, installation details, channel finish, color, and other custom options.

The data we pulled showed us a clear picture: while scroll rates were strong, with around 75% of users making it to the end of the form, interaction within the form itself stayed low. Users simply scrolled without actually engaging with it.

The heatmap clearly confirmed this pattern. Very few areas showed much engagement in the different fields. Users made it to the form, but they just weren't completing it.

The Diagnosis

We combined quantitative data, qualitative behavior analysis, and direct user input to get a better understanding of where users were dropping off, and more importantly, why.

The core issue? The imagery and layout didn't clearly communicate product quality, and it wasn’t helping users understand the choices they were being asked to make. Instead of reducing uncertainty, the form process itself was creating it.

The Solution

Using AI to structure our findings and speed up the ideation phase, we made the process more intuitive with a page redesign. We supported selection options with visual references that clearly illustrated how each choice affects the final result.

Now users are able to see what they're getting before they commit. This change not only made it easier to configure the product correctly, but it also reduced the uncertainty the previous version created.

Why This Matters

The entire research, ideation, and design phase was completed within just one week, without compromising the depth of analysis or the quality of the output.

This approach doesn't depend on the product itself. Instead, it depends on how the problem is identified and translated into the needed changes. We clearly defined the problem, used multiple data sources to validate it, and made sure our solution directly addressed the real cause of friction.

The same process can be applied wherever user decisions depend heavily on clarity and trust.

What This Means for E-Commerce Performance

The faster a CRO specialist moves from problem identification to test launch, the sooner you'll see noticeable improvements in add-to-cart rate, conversion rate, and revenue per visitor, rather than waiting out lengthy analysis and ideation cycles.

Reducing the time between identifying an opportunity and taking appropriate action allows you to capture performance gains earlier and more consistently. This shift toward AI-assisted optimization represents a fundamental change in how conversion rate optimization will work, where data-driven decision making starts happening in real time rather than over weeks.

"Data doesn't tell you what to do," Fernandes says. "It tells you what needs to be understood."

The brands benefitting from the biggest gains right now aren't just the ones with massive testing budgets. They're the ones who have figured out how to save time between insight and action without cutting back on effectiveness or quality.

Tactical Applications You Can Use

While this isn't a step-by-step tutorial, we'll share a few key principles from our process that can help you improve your experimentation program:

✔ Structure messy research into clear problem statements

After running heatmaps, session recordings, and surveys, organize all findings into clear problem statements grouped by funnel stage. This transforms scattered observations into prioritized, actionable issues.

✔ Generate multiple hypothesis directions before committing

Most teams test the first idea that comes to mind. Instead, wait until you have at least three to five different hypothesis directions per problem, each based on a different potential root cause. You'll find angles you and your team wouldn't have otherwise even considered.

✔ Challenge assumptions before finalizing any hypothesis

List every possible cause of the problem before writing a hypothesis. What else could be causing this behavior? What are we assuming that might not be true?

✔ Apply a specificity check to every hypothesis

A strong hypothesis has three parts: the change, the expected behavior shift, and the reason why. If your hypothesis is too vague, you’re more likely to produce ambiguous results that teach you nothing.

✔ Create wireframe briefs that eliminate interpretation

Skip the gap between "we have a hypothesis" and "design has no idea what we want." Document the layout, what elements are changing, what needs to stay the same, and what should be different about the user experience.

✔ Argue against your own ideas before prioritizing them

What are the strongest reasons this test might produce misleading results, or fail altogether? Find measurement risks, segment issues, and confounding variables before you spend time and effort on a test you don't yet fully understand.

✔ Log learnings in a reusable format

After every test, document what you tested, what the results were, what you believe the reason was, and what it might imply for future tests. All of that knowledge should compound over time, not stay hidden in slides nobody sees.
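As one way to make two of these principles concrete, here is a minimal Python sketch of the specificity check and the reusable learning log. It is an illustration, not a description of our internal tooling: the class names (Hypothesis, LearningEntry), the field names, and the JSON-lines format are all assumptions chosen for the example, and the sample hypothesis simply paraphrases the Mirrors Delivered case above.

```python
"""Illustrative sketch only: every class and field name here is hypothetical."""
from dataclasses import dataclass, asdict
import json


@dataclass
class Hypothesis:
    """One testable idea, split into the three parts of a strong hypothesis."""
    change: str           # what will be changed on the page
    expected_shift: str   # the behavior shift we expect to see
    reason: str           # why we believe the change causes that shift

    def missing_parts(self) -> list[str]:
        """Return the names of any parts that are empty or whitespace-only."""
        return [name for name, value in asdict(self).items() if not value.strip()]

    def is_specific(self) -> bool:
        """A hypothesis passes the check only when all three parts are filled in."""
        return not self.missing_parts()


@dataclass
class LearningEntry:
    """One post-test record covering the four items worth documenting."""
    hypothesis: Hypothesis   # what was tested
    result: str              # what the results were
    believed_reason: str     # what we believe the reason was
    implication: str         # what it might imply for future tests

    def to_json_line(self) -> str:
        """Serialize to one JSON line so entries can be appended to a shared log."""
        return json.dumps(asdict(self), sort_keys=True)


if __name__ == "__main__":
    # A vague idea: only the change is stated, so the check flags the other parts.
    vague = Hypothesis(change="Redesign the configuration form",
                       expected_shift="", reason="")
    print(vague.missing_parts())

    # A specific idea, paraphrased from the case study for illustration.
    specific = Hypothesis(
        change="Add visual references next to each configuration option",
        expected_shift="More users interact with the form fields",
        reason="Users cannot see how each choice affects the final result",
    )
    print(specific.is_specific())
```

Keeping the three parts as separate fields means a vague hypothesis fails the check before it reaches prioritization, and writing each learning as a single line of JSON keeps the log easy to search and append to. Any structured format your team already uses would serve the same purpose.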

The Expertise Behind the Efficiency

It’s important we’re clear about one thing: AI does not run our experimentation program. Our CRO specialists have full control.

AI is the tool we've harnessed to speed up the parts of the process that scale well with automation, including data interpretation, pattern recognition, hypothesis brainstorming, and wireframe drafting. But deciding which problems really matter most, validating whether conclusions are realistic, and translating insights into effective test designs still require human judgment and expertise.

The brands we work with aren't getting better results because we use AI. Those better results come from the process we've built that systematically removes the friction between insight and action. AI just happens to be one of the tools, albeit a powerful one, that makes that process much more efficient. The balance we're seeing between human expertise and AI assistance reflects a broader shift happening across the CRO industry, where the most effective CRO specialists are finding new ways to partially automate lengthy tasks without ceding strategic control.

Expertise drives results. AI supports and enhances that expertise. This is an important distinction.

The Path Forward

The experimentation programs that are winning aren't the ones running the most tests. They're the ones running the right tests, and doing it faster.

Every week stalled in analysis paralysis is a week of potential improvement that never comes to fruition. Every hypothesis that gets backlogged waiting for wireframes is an opportunity cost the business unknowingly absorbs.

We've found an effective way to significantly compress our process, with research cycles taking days rather than weeks. Hypothesis pipelines that moved at a snail's pace now run at full speed with tested, validated directions. And at that faster pace, the quality of what we're testing hasn't dropped. In fact, it's improved, because we now have time to explore more angles and challenge more assumptions before committing resources.

This evolution in how CRO teams operate is an obvious strategic shift in how optimization programs are being structured. And now, speed and quality are no longer trade-offs.

It's a safe bet that your funnel is losing revenue right now. The question isn't whether opportunities exist, because they definitely do. The question is how fast you are able to identify them, validate them, and take the appropriate action.

The gap between insight and action is where performance improvement either happens or doesn’t. Once you close that gap, everything else will follow.